Unless you are using the analogue output from a device, the digital processing will have little bearing on the sound, provided there is adequate processing and memory to ensure an uninterrupted stream of data. RAM speed and latency can only have an effect on processing speed, nothing else.

If you are using a USB or coax S/PDIF interface to a DAC, there is a small possibility that conducted noise might get into the DAC audio, but a well-designed DAC should provide effective isolation of the input.

If you are using an optical S/PDIF interface, there is no electrical path to conduct noise from PC to DAC via the signal path; it would have to be radiated from PC to DAC, or conducted via the mains supply. If you provide a sensible physical separation, and the DAC is adequately protected against radiated and conducted susceptibility, you should not have problems.

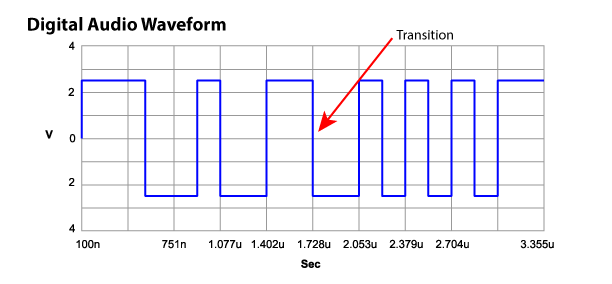

The last mechanism by which noise might be passed to the DAC is due to jitter in the signal timing of the USB or S/PDIF. Such jitter should be removed by the clock recovery, data sampling & FIFO buffer in the DAC. It is the sample clock to the DAC chip that determines the DAC sample timing, not the digital input stream. This DAC sample clock should be provided by a low phase noise source, such as a crystal oscillator.
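To put a rough number on why the DAC sample clock needs low phase noise, here is a minimal sketch using the standard textbook bound for a full-scale sine wave sampled with random clock jitter, SNR = -20·log10(2π·f·t_j). The function name and the jitter figures are illustrative assumptions, not measurements of any particular DAC.

```python
import math

def jitter_limited_snr_db(signal_hz, rms_jitter_s):
    """Upper bound on SNR for a full-scale sine sampled with random clock jitter."""
    return -20 * math.log10(2 * math.pi * signal_hz * rms_jitter_s)

# Assumed example figures: a 20 kHz tone with 1 ns and 100 ps of RMS jitter.
for jitter_s in (1e-9, 100e-12):
    snr_db = jitter_limited_snr_db(20_000, jitter_s)
    print(f"{jitter_s * 1e12:.0f} ps jitter -> {snr_db:.1f} dB")
```

With these assumed figures, 1 ns of jitter limits a 20 kHz tone to about 78 dB, and every tenfold reduction in jitter buys another 20 dB.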

The simple protocols have no flow control between source and destination, so data overflow or underflow can occur because each end runs from its own oscillator: the source sends data at one rate, and the DAC consumes it at a slightly different rate. This is mostly dealt with by a FIFO buffer in the DAC, and by the fact that crystal oscillators have a pretty good tolerance. A data overflow or underflow results in a missed sample or a duplicated sample. VoIP systems, for instance, provide a mechanism to drop or repeat frames as necessary, since the source and destination clock rates may differ more significantly.
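As a rough illustration of the clock mismatch, the sketch below estimates how long a FIFO can absorb the drift before a sample has to be dropped or repeated. The function name, the 100 ppm combined mismatch and the 1024-sample headroom are assumptions chosen for the arithmetic, not figures from any specific DAC.

```python
def seconds_until_fifo_error(sample_rate_hz, ppm_mismatch, headroom_samples):
    """Time for the source/DAC clock mismatch to use up the FIFO headroom."""
    drift_samples_per_second = sample_rate_hz * ppm_mismatch * 1e-6
    return headroom_samples / drift_samples_per_second

# Assumed: 48 kHz audio, 100 ppm total mismatch, FIFO started half full
# with 1024 samples of headroom in either direction.
t = seconds_until_fifo_error(48_000, 100, 1024)
print(f"{t:.1f} s between dropped or repeated samples")  # 213.3 s
```

Under these assumptions the drift is only 4.8 samples per second, so a single sample error would occur every few minutes at worst; tighter oscillators or a deeper FIFO stretch that interval further.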

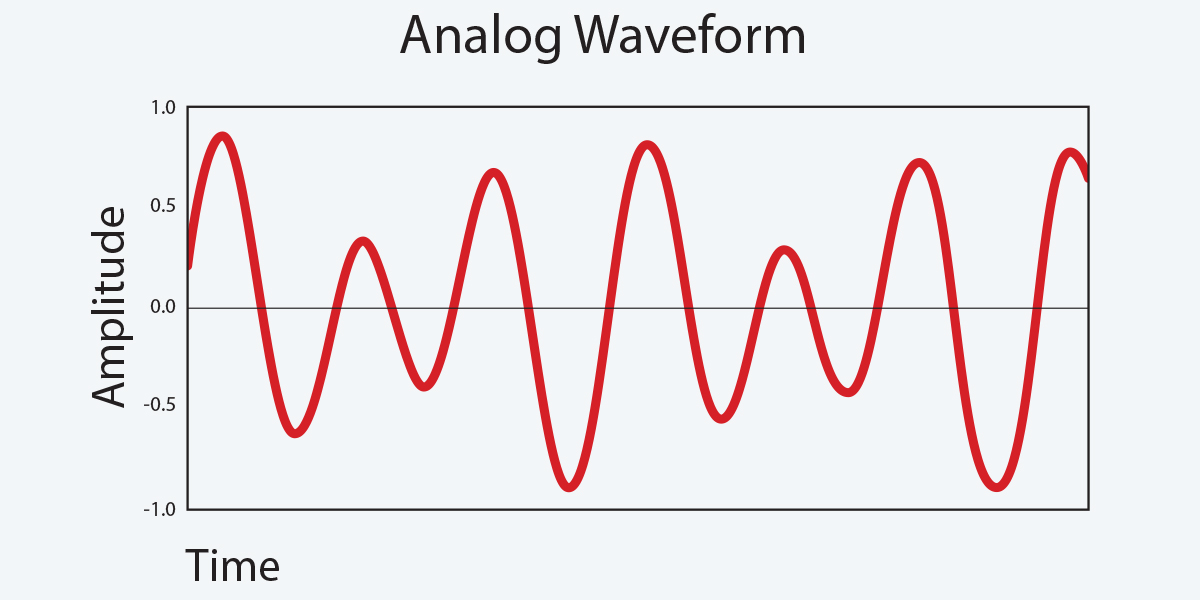

If you are using an analogue output from a PC, laptop or tablet, you might consider using a lead fitted with an RF suppressing filter, to prevent RF noise being conducted along the signal cable. The audio circuits should also be designed so that they do not respond to RF, usually by means of a simple low-pass filter on the signal input. They should also be designed to resist radiated interference, so that RF is not injected directly into the circuit.
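For a feel of what a simple input low-pass filter does, here is a minimal sketch of an ideal first-order RC filter. The component values (1 kΩ and 1 nF) and function names are hypothetical, and real parts have parasitics that make the RF attenuation worse than this ideal figure.

```python
import math

def rc_cutoff_hz(r_ohms, c_farads):
    """-3 dB corner frequency of an ideal first-order RC low-pass filter."""
    return 1 / (2 * math.pi * r_ohms * c_farads)

def rc_attenuation_db(freq_hz, r_ohms, c_farads):
    """Gain in dB (negative means attenuation) of the ideal filter at freq_hz."""
    ratio = freq_hz / rc_cutoff_hz(r_ohms, c_farads)
    return -10 * math.log10(1 + ratio ** 2)

R, C = 1_000, 1e-9  # hypothetical values: 1 kOhm, 1 nF
print(f"cutoff: {rc_cutoff_hz(R, C) / 1e3:.1f} kHz")
print(f"at 20 kHz:  {rc_attenuation_db(20e3, R, C):.2f} dB")
print(f"at 900 MHz: {rc_attenuation_db(900e6, R, C):.1f} dB")
```

With these values the corner sits near 159 kHz, so the audio band passes almost untouched while an ideal filter would knock a 900 MHz signal down by roughly 75 dB.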

RF injection can cause problems if it hits a rectifying element in the design, which will perform amplitude demodulation to baseband (audio frequencies). The classic example is mobile phone injection (GSM gives a buzz at about 217 Hz, the TDMA frame rate), or, even more classic, dental fillings that receive radio stations...
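The GSM buzz frequency falls straight out of the frame timing. The sketch below assumes the standard GSM traffic multiframe of 26 TDMA frames in 120 ms; the function name is just for illustration.

```python
def gsm_frame_rate_hz(frames_per_multiframe=26, multiframe_duration_s=0.120):
    """TDMA frame repetition rate, which is the fundamental of the audible buzz."""
    frame_duration_s = multiframe_duration_s / frames_per_multiframe  # about 4.615 ms
    return 1 / frame_duration_s

fundamental = gsm_frame_rate_hz()
print(f"fundamental: {fundamental:.1f} Hz")

# The transmit burst is a narrow pulse, so the demodulated envelope is rich in
# harmonics, all of which land in the audio band.
harmonics = [round(n * fundamental, 1) for n in range(1, 6)]
print(harmonics)
```

Because the burst is pulsed rather than sinusoidal, what you hear is a harsh buzz made up of the fundamental and its harmonics, rather than a clean tone.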

If you are using WiFi or Ethernet streaming, there is almost no chance of conducted noise from the 'player' computer; the media stream is transported in an encoded (codec) format, and reconstructed by the digital media renderer (DMR). A badly designed DMR might be susceptible to WiFi or Ethernet noise, and to noise generated by the processor performing the rendering.