Hak Foo

Active Member

As I understand it, amplifiers normally have to be built with pairs of output transistors, because one device handles the positive voltages and the other the negative. You end up either wasting a lot of energy running them when they're doing nothing half the time (class A) or introducing switching noise by cutting them off (class B/AB).

What would happen if you injected some DC into the signal to amplify, then removed it after amplification?

Instead of, say, a signal centered on 0 V and ranging from -2 V to +2 V, it could range from 0 to 4 V, centered on 2 V.

Then you'd only need a "positive side" transistor to amplify that to, say, 0 to 30 V centered on 15 V. To get it back to a normal zero-centered signal, a simple option would be to use a +15 V reference as "ground" for the speakers.
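To make the arithmetic concrete, here is a rough sketch of the idea using the numbers above. The function name is made up for illustration, the gain of 7.5 is simply 30 V divided by 4 V, and the transistor is assumed to be perfectly linear, which a real one would not be.

```python
def single_ended_chain(v_in, bias=2.0, gain=7.5, reference=15.0):
    """Return (biased, amplified, speaker) voltages for one input sample.

    Hypothetical idealized model: bias, gain, and reference are the
    example figures from the post, not values from a real design.
    """
    biased = v_in + bias             # shift -2..+2 V up to 0..4 V
    amplified = biased * gain        # 0..4 V becomes 0..30 V
    speaker = amplified - reference  # speaker is returned to +15 V, not 0 V
    return biased, amplified, speaker


if __name__ == "__main__":
    for v in (-2.0, 0.0, 2.0):
        print(v, single_ended_chain(v))
```

In this idealized model the speaker sees a swing of -15 V to +15 V around zero, even though the transistor output itself never goes below 0 V.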

This seems like it has some merit: 50% fewer expensive output transistors and no switching distortion. On the other hand, you've got to keep the two offsets precisely matched, and if it goes wrong, you're running a huge DC offset by design. It also requires transistors that behave in a very linear way.
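The offset-matching worry can be put in numbers with a small sketch. It assumes the same idealized linear stage and the example figures from this post (2 V input bias, gain of 7.5, +15 V speaker reference); the function name is hypothetical.

```python
def speaker_dc_offset(bias, gain, reference):
    """DC voltage left across the speaker with no input signal.

    Idealized model: the amplified bias should cancel the reference
    exactly; whatever does not cancel sits on the speaker as DC.
    """
    return bias * gain - reference


if __name__ == "__main__":
    # Offsets matched: nothing left over.
    print(speaker_dc_offset(2.0, 7.5, 15.0))
    # Input bias drifts by 0.1 V: the error is multiplied by the gain.
    print(speaker_dc_offset(2.1, 7.5, 15.0))
    # Reference collapses to 0 V: the full 15 V of DC reaches the speaker.
    print(speaker_dc_offset(2.0, 7.5, 0.0))
```

The point of the sketch is that any error in the input bias is multiplied by the gain before it reaches the speaker, so a small drift at the input becomes a much larger DC offset at the output.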

Has anyone ever tried a design like that? Or am I completely lost on how AC signals work?

HH Scott LK60 DSC_0789

HH Scott LK60 DSC_0789

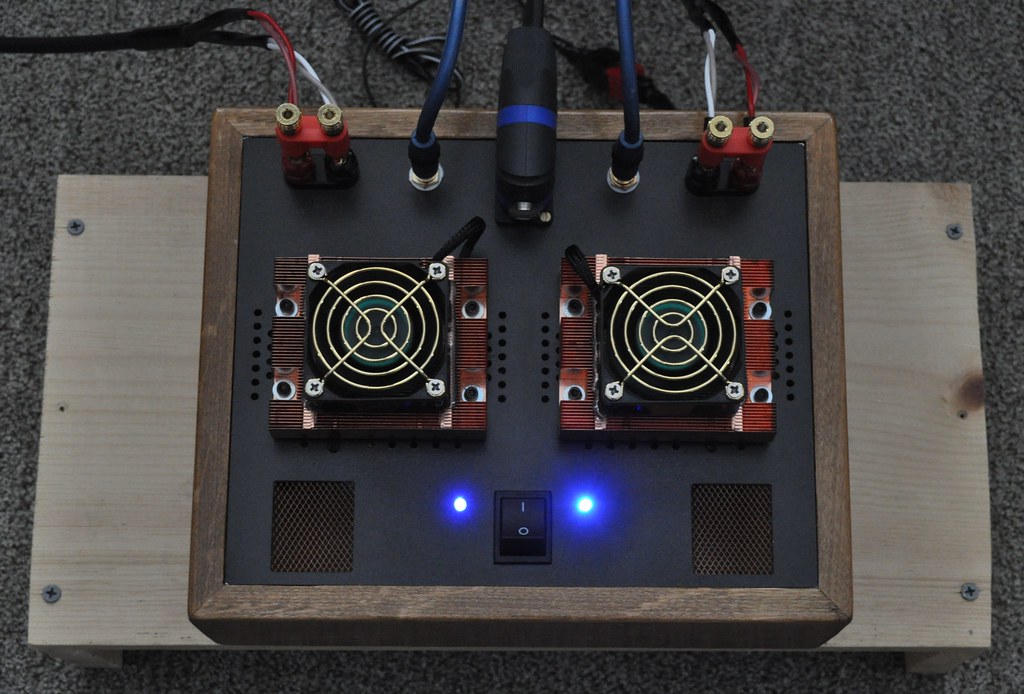

DSC_2871 (2)

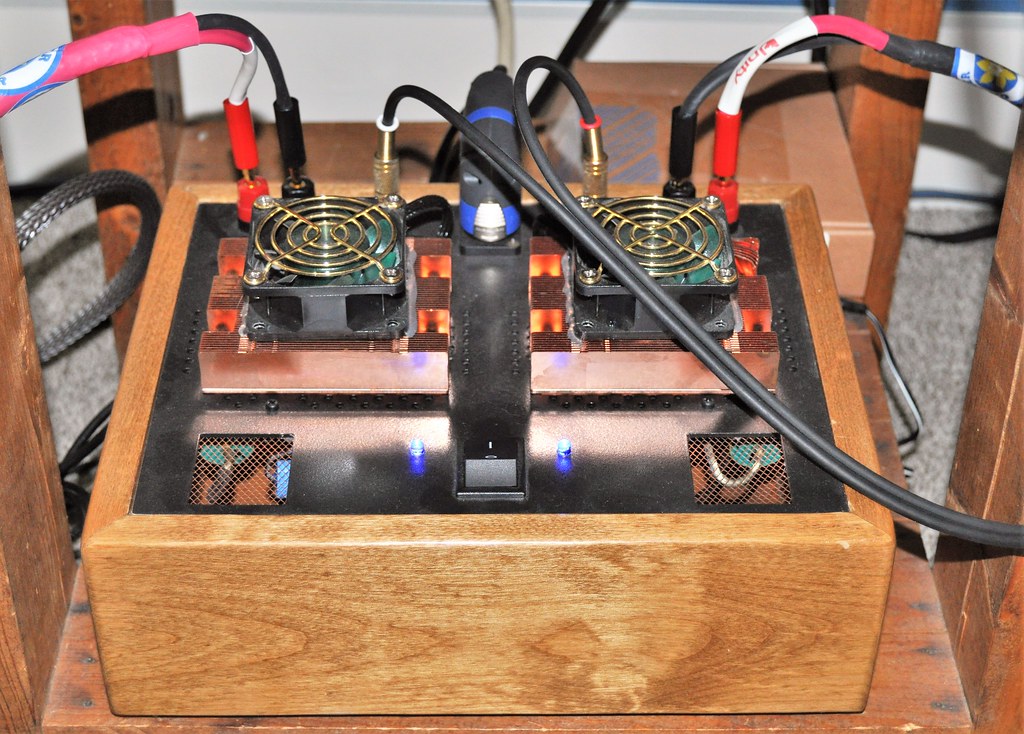

DSC_2871 (2) DSC_6923 (2)

DSC_6923 (2)